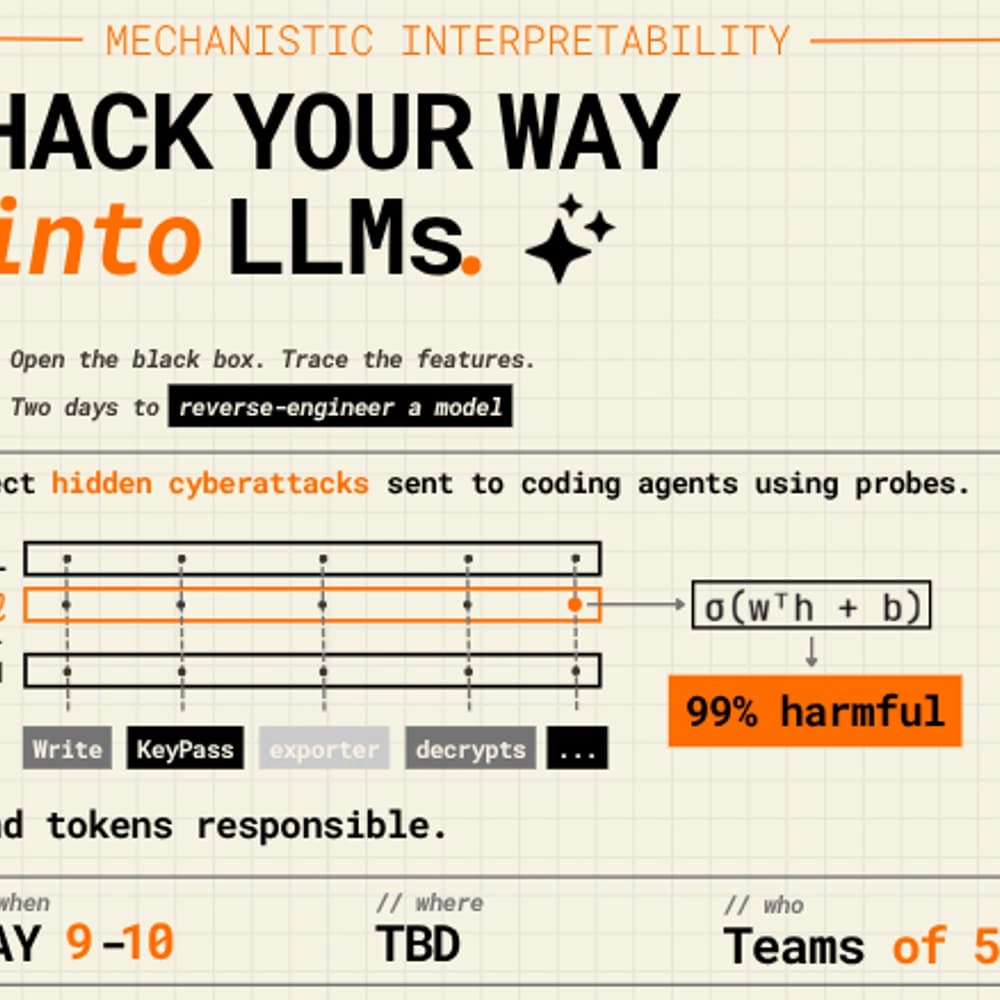

Hack your way into LLMs

Dive into mechanistic interpretability at EPFL! 🚀 SAIL is hosting a hackathon where you'll explore a curated adversarial dataset with five coding models. Your mission? Understand what happens inside these models when they face potentially harmful requests.

Two Tracks to Explore:

🔍 Probes as Monitors: Classify internal activations to detect harmful compliance before the model generates potentially risky outputs.

🎯 Attribution: Identify which specific inputs push a model toward supporting an attacker.
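To give a feel for the probes track, here is a minimal sketch of a linear probe: a logistic-regression classifier trained on activation vectors to predict a binary "harmful compliance" label. Everything in it is an assumption made for illustration. The activations are synthetic Gaussian clusters rather than real hidden states, and the function names (`make_synthetic_activations`, `train_linear_probe`, `probe_predict`) are hypothetical, not part of the hackathon's materials. It uses only the Python standard library so it runs anywhere; in practice you would likely extract activations from a model and train the probe in PyTorch.

```python
import math
import random


def make_synthetic_activations(n, dim, seed=0):
    """Hypothetical stand-in for real hidden states: two Gaussian clusters.

    Label 1 means "model complied with a harmful request", label 0 otherwise.
    """
    rng = random.Random(seed)
    data = []
    for i in range(n):
        label = i % 2
        shift = 1.0 if label == 1 else -1.0
        vec = [rng.gauss(shift, 1.0) for _ in range(dim)]
        data.append((vec, label))
    return data


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def probe_predict(w, b, vec):
    """Probability that the activation vector belongs to class 1."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, vec)) + b)


def train_linear_probe(data, dim, epochs=50, lr=0.1):
    """Fit logistic regression with plain stochastic gradient descent."""
    w = [0.0] * dim
    b = 0.0
    for _ in range(epochs):
        for vec, label in data:
            err = probe_predict(w, b, vec) - label  # gradient of log loss w.r.t. the logit
            for j in range(dim):
                w[j] -= lr * err * vec[j]
            b -= lr * err
    return w, b


def accuracy(w, b, data):
    """Fraction of examples classified correctly at a 0.5 threshold."""
    correct = sum((probe_predict(w, b, vec) >= 0.5) == bool(label) for vec, label in data)
    return correct / len(data)


DIM = 8
train = make_synthetic_activations(200, DIM, seed=0)
test = make_synthetic_activations(100, DIM, seed=1)
w, b = train_linear_probe(train, DIM)
test_accuracy = accuracy(w, b, test)
print(f"held-out probe accuracy: {test_accuracy:.2f}")
```

Used as a monitor, such a probe would score activations captured partway through the forward pass and flag a request before any tokens are generated. How well this works on real models is exactly what the track asks you to find out.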

What’s Included:

- Claude Code Credits

- GPU Instances

- Mentorship

- Food throughout the weekend

This is your chance to achieve a publishable outcome in just 48 hours! 🏆

Prizes Awaiting:

💰 CHF 1,000

💰 CHF 500

💰 CHF 300

All you need to participate is PyTorch fluency and curiosity about model internals!

Important:

📝 Register by May 6th

📅 Event Date: May 9-10

👥 Team Size: Up to 5 members

Join us for a thrilling weekend of innovation and discovery!

NOTE: We cannot guarantee the accuracy of the information provided about this event. Visit the event website to verify details such as the date, times, prices, and location.